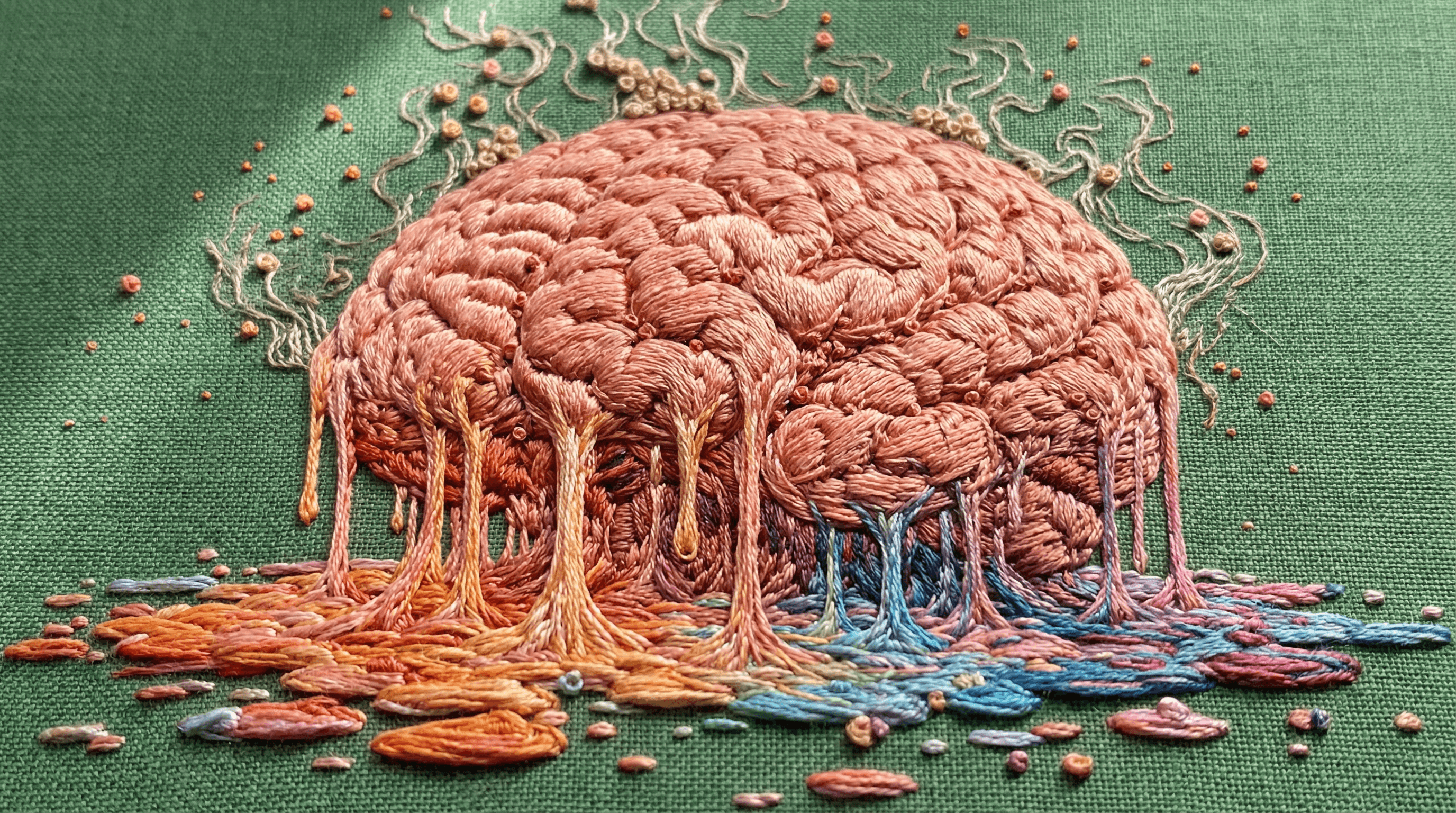

Is AI Melting Our Brains?


I understand why people say AI is melting our brains. That fear doesn’t come from nowhere. But I think it’s slightly misdiagnosed.

Let’s reframe it.

Think of it like shoveling a driveway.

Every winter, people clear snow differently.

Some shovel by hand: slow, physical, familiar. Some prepare ahead of time, lay down plastic, and use their strength more strategically. And some use tools that do the work for them: snow blowers, salt, even industrial heating systems designed to melt everything fast.

Efficient. Minimal effort. The driveway is clear in minutes.

But heat changes the job.

Melted snow turns into water, and if you don’t manage where that water goes, it refreezes into ice. That’s when driveways become dangerous, not because snow was removed, but because no one stayed responsible for what comes after.

Research on automation and cognition points to the same pattern: when tools reduce friction, people don't stop thinking; they stop monitoring, unless they're trained to stay engaged. The risk isn't ease. It's disengagement after the output appears.

Judgment is the work that still belongs to us.

Judgment looks like:

- deciding what inputs matter before you prompt
- checking whether an output makes sense in context
- knowing when speed is helpful and when it's reckless
- catching errors, bias, or shortcuts before they harden into decisions

You still have to skim the surface, redirect the flow, and make sure the result doesn’t freeze into something worse later.

The danger isn’t that the work got easier. It’s assuming the job is finished the moment the snow disappears.

My grandpa calls me “lazy” because I don’t remember directions like he does. He memorized every back road; I open my phone and go.

But I still decide where I’m going. When to leave. Whether speed, scenery, or certainty matters more. I’m still responsible for getting there. I just don’t pretend that memorizing the map is the point anymore.

He learned to navigate in a world that required memory.

I navigate in a world that requires judgment.

My brain didn’t melt. It recalibrated where it does the work.

That’s the real risk with AI. Not that it makes you less intelligent, but that you stop engaging your judgment once the answer shows up.

If you stay involved after the melt (monitoring, adjusting, thinking past the output), AI doesn't replace human intelligence.

It amplifies it.

We should ask whether our minds were shaped by working in the cold, and whether we’re prepared for what happens when everything starts to melt.

Let's chat again soon...

Gibz

My mission is to help you create and earn on your terms.